Portable Somatic Wearable: AI-Assisted Reflection Through Gaze-Derived Somatic Markers

DOI: https://doi.org/10.1145/3772363.3799134

CHI EA '26: Extended Abstracts of the 2026 CHI Conference on Human Factors in Computing Systems, Barcelona, Spain, April 2026

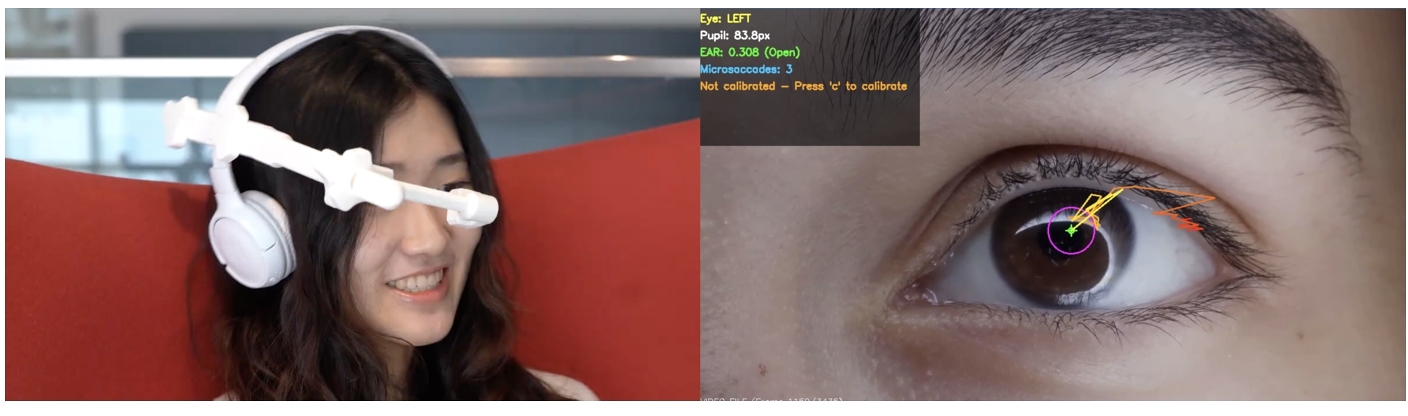

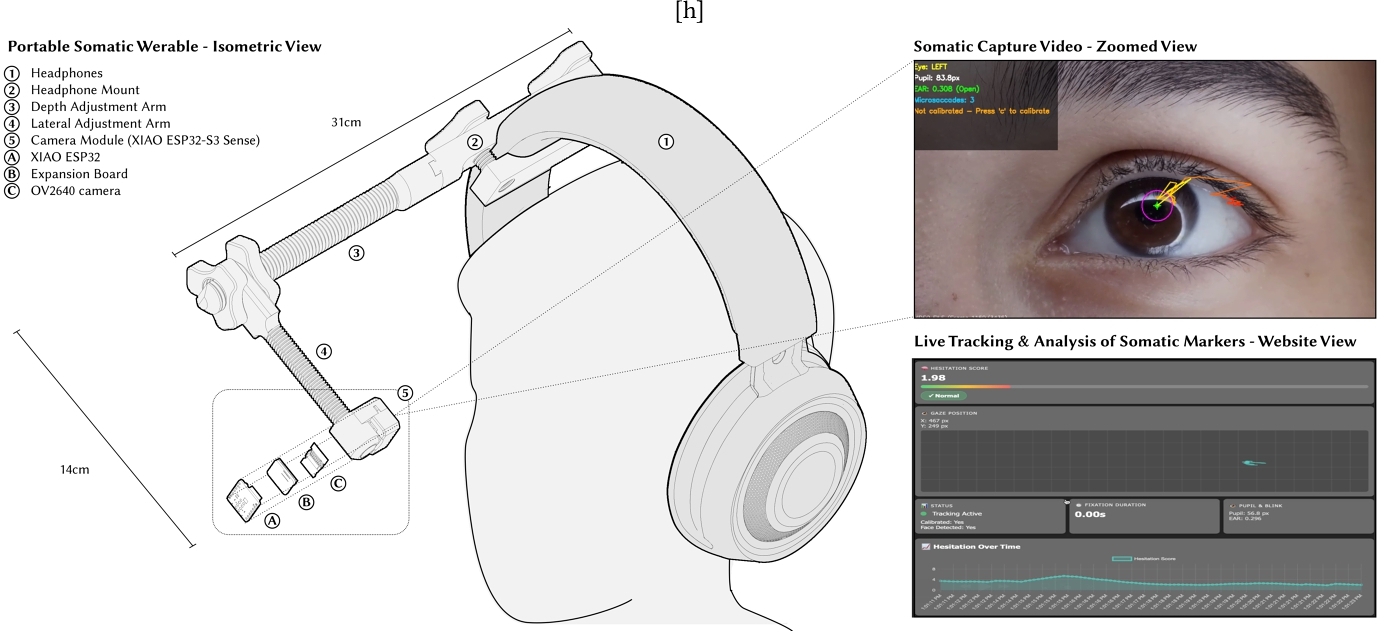

AI-assisted reflection systems are increasingly common, yet most remain primarily linguistic, overlooking the embodied and pre-cognitive signals through which people experience and silently express affect. We present the Portable Somatic Wearable, an interactive demo that enables attendees to engage in a short AI-guided voice reflection while gaze-derived somatic markers shape the conversation in real time. The system captures nonverbal cues such as hesitation, fixation dynamics, and temporal pacing, feeding these markers back into the AI facilitator to adapt conversational pacing and supportive prompts. Addressing limitations observed in our prior Gaze to the Stars installation, the Portable Somatic Wearable enables rapid setup and repeatable deployment through a compact, accessible headphone-integrated sensing module. We contribute (1) a portable sensing platform for repeatable gaze-based somatic capture and (2) an integrated somatic-reactive pipeline that uses these markers to adapt AI interaction, offering a path toward more accessible, socially attuned and humane AI-mediated reflection systems.

ACM Reference Format:

Saetbyeol LeeYouk, Sergio Mutis, Yuxiang Cheng, and Behnaz Farahi. 2026. Portable Somatic Wearable: AI-Assisted Reflection Through Gaze-Derived Somatic Markers. In Extended Abstracts of the 2026 CHI Conference on Human Factors in Computing Systems (CHI EA '26), April 13–17, 2026, Barcelona, Spain. ACM, New York, NY, USA, 5 pages. https://doi.org/10.1145/3772363.3799134

1 Introduction

AI companions are increasingly used to support reflection, emotional processing, and self-understanding through dialogue. Yet most AI-mediated reflection systems remain primarily linguistic: they attend to what people say, while overlooking how experience is often first registered through the body. Long before experience becomes language or interpretation, the body reacts—a held breath, a moment of stillness, a glance averted or sustained. Such pre-cognitive signals orient attention, signal resistance or care, and guide social interaction. Drawing from Damasio's somatic marker hypothesis, bodily states can be understood as somatic markers: affective cues that precede conscious reasoning and shape perception, attention, and decision-making [2].

Despite the centrality of these embodied cues, interactive systems for reflection typically privilege explicit narration, inviting participants to interpret their experiences only after they have been articulated in words. Even when bodily signals are incorporated, they are frequently treated as metrics for classification, reduced to predefined emotional categories that flatten the ambiguity and temporal richness of somatic experience. We argue that embodied signals should instead be treated as meaningful interaction material: not as diagnostics, but as cues for attunement, pacing, and care.

In this paper, we present the Portable Somatic Wearable, an interactive demo that enables conference attendees to engage in a short guided AI voice reflection while gaze-derived somatic markers shape the conversation in real time. The system captures micro-behaviors such as hesitation, fixation dynamics, and blink rhythms, translating them into somatic markers that modulate conversational pacing and supportive prompts. By operationalizing ocular dynamics as expressive input, the pod expands AI-assisted reflection beyond language and explores how conversational agents can respond to embodied withdrawal, uncertainty, and temporal pacing.

2 Related Work

AI-mediated reflection and mental health. Recent systems explore conversational agents for journaling, guided reflection, and mental health support, leveraging large language models to scaffold self-disclosure and meaning-making [1, 5, 8, 12]. These tools often foreground coherence and narrative articulation, emphasizing interpretability and verbal self-report as primary interaction channels. While valuable, such approaches can implicitly privilege cognition over pre-linguistic affect, placing the burden of emotional description on the participant. At the same time, the rapid incorporation of LLMs across HCI has raised renewed questions about what interaction modalities are centered and what forms of lived experience remain uncaptured [9].

Affective computing from gaze and ocular dynamics. Affective computing research has shown that ocular micro-behaviors such as pupil diameter, blink frequency, and fixation duration can predict valence and arousal [10] and reflect attention and cognitive state [6]. Multimodal systems combining ocular dynamics with biosignals (e.g., EEG) further improve affect inference [14]. However, across many systems, somatic data is treated primarily as a classification problem—mapped onto discrete emotional labels or scores. This collapses the ambiguity inherent in bodily expression and shifts attention from lived experience to semantic certainty.

Immersive pods and introspective interfaces. A growing body of work investigates immersive enclosures, VR-adjacent installations, and intimate interaction settings that reduce external distraction and amplify presence. Recent systems explore contemplative XR environments and inward-facing mixed reality platforms designed explicitly for self-directed reflection [11, 13]. Social VR studies further examine how guided meditation can be scaffolded through shared immersive settings, including the practices and tradeoffs involved in collective inward experience [7]. Our work builds on this lineage by combining an enclosed reflective environment with real-time gaze analysis, using pre-cognitive somatic cues not simply for sensing, but as interaction material that modulates the pacing and felt responsiveness of AI-guided conversation.

3 Context & Motivation

Building on research in immersive introspective interfaces, our work explores how AI-guided reflection can incorporate embodied and pre-cognitive cues rather than relying solely on linguistic narration. This demo is motivated by observations from our previous installation Gaze to the Stars (GTS) [4], a public participatory installation that invited participants to share personal stories through an intimate guided interaction experience. GTS examined how personal affect could be externalized and shared at civic scale by embedding anonymized narrative summaries into close-up recordings of participants’ eyes.

At the center of GTS was an installation-scale sensory pod designed to reduce distraction and support vulnerability through enclosure and a controlled audiovisual atmosphere (see Figure ). Inside the pod, participants engaged in a short guided voice conversation with an AI facilitator while a close-up eye-facing camera recorded high-resolution eye video alongside the conversation transcript. This installation pod enabled consistent data capture across 200 participants and supported the broader GTS pipeline in which stories were anonymized, summarized, and later encoded into the eye video for public projection and interactive visualization [3].

However, GTS revealed two limitations that motivated this work. First, because AI-mediated reflection is rapidly becoming mainstream, access, reach, and repeatability matter: the original pod required installation-scale infrastructure and specialized fabrication, limiting who could experience the system and where it could be deployed. Second, while the original pipeline captured and visualized eye-based expression as part of the artistic output, the reflective interaction itself remained primarily language-driven: the AI responded to participants’ spoken narratives while ignoring their real-time somatic micro-behaviors.

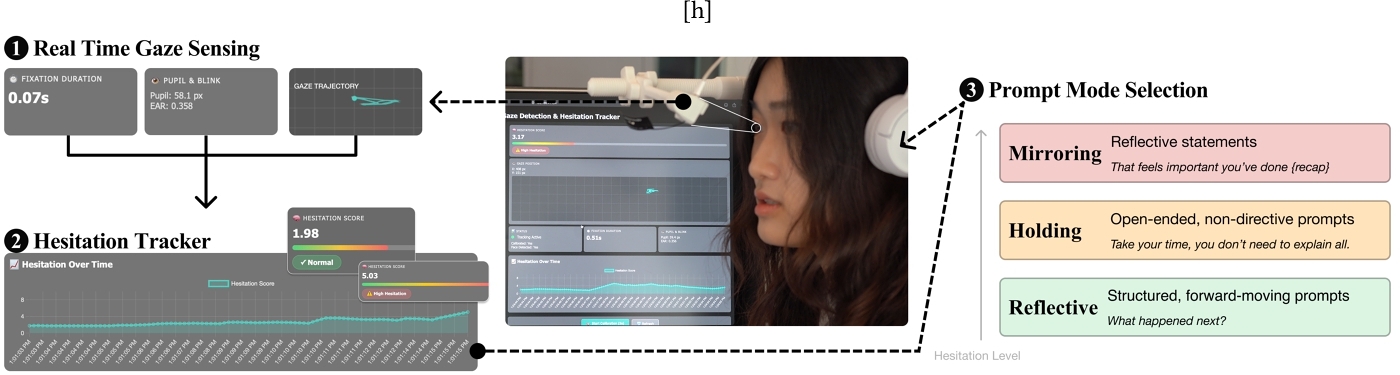

4 Wearable Interaction - Somatic Pipeline

The updated wearable interaction flow (see Figure 3) integrates real-time gaze sensing to support somatic-responsive storytelling. As users engage with the wearable, the system continuously captures subtle eye-based behaviors, such as gaze stability, pupil changes, and blink frequency, to assess moments of hesitation or internal tension.
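To make the continuous capture step concrete, the following is a minimal sketch of how one short window of eye samples might be reduced to the three behaviors named above. The `EyeSample` fields, the `window_features` function, and the specific feature definitions (dispersion as a gaze stability proxy, open-to-closed transitions as blinks) are illustrative assumptions and are not taken from the system's implementation.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class EyeSample:
    """One hypothetical sample from the eye-facing camera pipeline."""
    t: float         # timestamp in seconds
    x: float         # normalized horizontal gaze position
    y: float         # normalized vertical gaze position
    pupil: float     # pupil diameter in arbitrary units
    eye_open: bool   # False while the eyelid is closed


def window_features(samples: list[EyeSample]) -> dict[str, float]:
    """Summarize one time window into three coarse eye-based features."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    duration = samples[-1].t - samples[0].t
    if duration <= 0:
        raise ValueError("timestamps must be increasing")
    open_samples = [s for s in samples if s.eye_open]
    if not open_samples:
        raise ValueError("no open-eye samples in this window")

    # Gaze stability proxy: spread of gaze positions (lower means steadier).
    dispersion = (pstdev([s.x for s in open_samples])
                  + pstdev([s.y for s in open_samples]))

    # Count a blink each time the eye goes from open to closed.
    blinks = sum(1 for a, b in zip(samples, samples[1:])
                 if a.eye_open and not b.eye_open)

    return {
        "gaze_dispersion": dispersion,
        "pupil_mean": mean([s.pupil for s in open_samples]),
        "blinks_per_min": blinks * 60.0 / duration,
    }
```

A window of a few seconds would typically be recomputed on a sliding basis so that downstream logic sees moment-to-moment changes rather than a single session average.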

At the start of each session, the system calibrates the user's baseline for gaze and pupil metrics. From there, it interprets hesitation levels on a low–medium–high scale using a composite of gaze fixation, pupil dilation, and blink activity. Rather than interrupting or advancing the conversation linearly, the system slows down or mirrors emotional moments when hesitation increases, keeping the storytelling experience aligned with the participant's embodied cues.
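The calibration and scoring step could be sketched as follows. This is an assumption-laden illustration, not the system's actual scoring: the feature names, the equal weighting of the three components, and the cut points `low_cut` and `high_cut` are all hypothetical. The sketch expresses each feature as a deviation from the participant's own baseline and averages the deviations into one composite score.

```python
from statistics import mean, pstdev

# Hypothetical feature names for the three components of the composite.
FEATURES = ("fixation_s", "pupil", "blinks_per_min")


def calibrate(baseline_windows):
    """Per-feature (mean, std) from windows recorded at session start."""
    stats = {}
    for name in FEATURES:
        values = [w[name] for w in baseline_windows]
        std = pstdev(values)
        # Fall back to 1.0 so a perfectly flat baseline cannot divide by zero.
        stats[name] = (mean(values), std if std > 0 else 1.0)
    return stats


def hesitation_level(window, stats, low_cut=1.0, high_cut=2.0):
    """Return (level, score) for one window relative to the baseline."""
    z = {n: (window[n] - stats[n][0]) / stats[n][1] for n in FEATURES}
    # Longer fixations count toward hesitation; pupil and blink changes
    # count in either direction. Equal weights are an assumption.
    score = (max(z["fixation_s"], 0.0)
             + abs(z["pupil"])
             + abs(z["blinks_per_min"])) / 3.0
    if score < low_cut:
        return "low", score
    if score < high_cut:
        return "medium", score
    return "high", score
```

Scoring against a per-session baseline, rather than fixed population thresholds, keeps the scale relative to each participant's resting gaze and pupil behavior.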

This gaze-driven somatic data does not directly instruct the language model. Instead, it subtly modulates the AI facilitator's pacing and tone. When low hesitation is detected, the AI prompts narrative continuation. In medium hesitation states, it holds space with open-ended or non-directive prompts. High hesitation triggers reflective affirmations that pause the narrative flow entirely. This shift allows the system to treat embodied hesitation not as silence to be filled, but as meaningful expression to be respected.
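The three-tier behavior described above can be read as a small lookup from hesitation level to facilitation style. The sketch below is one hypothetical way to express it: the `FacilitatorStyle` type, its field names, and the pause durations are assumptions for illustration. Only the coarse level reaches the facilitator logic, in keeping with the statement that raw somatic data does not directly instruct the language model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FacilitatorStyle:
    """Hypothetical pacing and tone settings for the AI facilitator."""
    strategy: str            # family of prompts to draw from
    pause_s: float           # silence to leave before responding (assumed)
    pauses_narrative: bool   # True if the story is not advanced at all


STYLES = {
    "low": FacilitatorStyle("prompt narrative continuation", 0.5, False),
    "medium": FacilitatorStyle("open-ended or non-directive prompt", 2.0, False),
    "high": FacilitatorStyle("reflective affirmation", 4.0, True),
}


def style_for(level: str) -> FacilitatorStyle:
    """Map a hesitation level to a facilitation style."""
    try:
        return STYLES[level]
    except KeyError:
        raise ValueError(f"unknown hesitation level: {level!r}") from None
```

Keeping the mapping in a plain table makes the policy easy to inspect and adjust without retraining or re-prompting the underlying model.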

6 Results & Observations

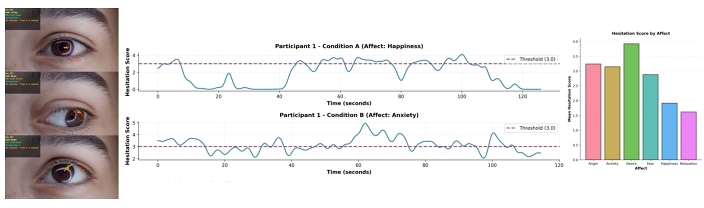

We conducted a within-subjects user study (N=7) to evaluate how gaze-based somatic sensing shaped the storytelling experience. Each participant engaged in two sessions: one with a standard text-based AI facilitator, and another using the somatic-aware pipeline. In both, participants responded to prompts designed around core affective categories, such as anger, fear, sadness, or joy.

Results showed that somatic behaviors reliably reflected emotional tone. For example, higher hesitation scores (linked to increased pupil change, longer fixations, and altered blink rhythms) were consistently observed during negative, high-arousal prompts (e.g., anxiety, anger), compared to more relaxed or joyful states (see Figure 4). These scores varied not only across conditions but also moment-to-moment within a narrative, supporting the system's capacity to detect nuanced emotional shifts.

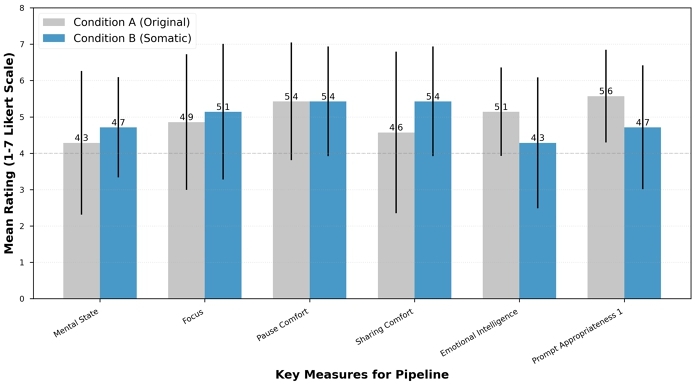

In post-study surveys, participants rated the somatic-aware version higher in perceived comfort, emotional pacing, and overall empathy (see Figure 5). Many described the interaction as “more in tune” or “better at listening” than the baseline facilitator. Interestingly, even those who preferred more directive guidance acknowledged the value of a system that responded to moments of hesitation with space rather than interruption.
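For readers who want to run a comparable within-subjects comparison, one simple option for a small sample is an exact two-sided sign test on per-participant rating differences. The text does not state which analysis was used, so this is only a sketch of one possibility; the function names are hypothetical and no study data is reproduced here.

```python
from math import comb


def paired_differences(baseline, somatic):
    """Per-participant difference: somatic-aware minus baseline rating."""
    if len(baseline) != len(somatic):
        raise ValueError("each participant needs a rating in both conditions")
    return [s - b for b, s in zip(baseline, somatic)]


def sign_test_p(diffs):
    """Exact two-sided sign test; tied pairs (difference of 0) are dropped."""
    pos = sum(1 for d in diffs if d > 0)
    neg = sum(1 for d in diffs if d < 0)
    n = pos + neg
    if n == 0:
        return 1.0
    k = min(pos, neg)
    # Probability of k or fewer of the rarer sign under a fair coin.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)
```

With seven participants, the smallest two-sided p-value this test can produce is 2/128, reached only when every participant rates one condition higher than the other, which is a useful reminder of how much a sample of this size can and cannot show.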

These findings support our design hypothesis: somatic markers can serve as a meaningful cue in human–AI interaction. Rather than viewing silence as breakdown, the system learns to recognize it as embodied resistance, reflection, or emotional weight. Through this, the wearable offers a gentler storytelling companion, one that listens not only to words, but to the body behind them.

7 Conclusion

The Portable Somatic Wearable is an interactive system that uses gaze-based somatic markers to shape real-time, AI-facilitated storytelling. Within a compact, portable pod, participants engage in intimate narrative sessions where micro-behaviors such as hesitation and gaze shifts dynamically modulate the pacing and tone of the AI's responses. The system's portability allows deployment across diverse cultural and geographic contexts, supporting the collection of embodied interaction data at a global scale. By foregrounding somatic interaction as a meaningful input in narrative experiences, this work contributes to interaction design by demonstrating how bodily signals can act as co-authors in emotionally adaptive, participatory storytelling systems.

References

[1] Wanling Cai, Yucheng Jin, Xianglin Zhao, and Li Chen. 2023. “Listen to Music, Listen to Yourself”: Design of a Conversational Agent to Support Self-Awareness While Listening to Music. In Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems (CHI ’23). Association for Computing Machinery, New York, NY, USA. https://doi.org/10.1145/3544548.3581427

[2] Antonio R. Damasio, Daniel Tranel, and Hanna C. Damasio. 1991. Somatic Markers and the Guidance of Behaviour: Theory and Preliminary Testing. In Frontal Lobe Function and Dysfunction, Harvey S. Levin, Howard M. Eisenberg, and Arthur Lester Benton (Eds.). Oxford University Press.

[3] Behnaz Farahi, Sergio Mutis, Yaluo Wang, Suwan Kim, Chenyue Dai, and Haolei Zhang. 2025. Gaze to the Stars: AI and Public Art from Personal Affect and Collective Empathy. In NeurIPS 2025 Creative AI Track. NeurIPS. https://openreview.net/forum?id=XBbW9zpRJ0

[4] Behnaz Farahi, Haolei Zhang, Suwan Kim, Sergio Mutis, Yaluo Wang, and Chenyue Dai. 2025. Gaze to the Stars: AI, Storytelling and Public Art. In NeurIPS 2025 Creative AI Track. NeurIPS. https://openreview.net/forum?id=Eh84s4DiSC

[5] Taewan Kim, Donghoon Shin, Young-Ho Kim, and Hwajung Hong. 2024. DiaryMate: Understanding User Perceptions and Experience in Human-AI Collaboration for Personal Journaling. In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems (CHI ’24). Association for Computing Machinery, New York, NY, USA. https://doi.org/10.1145/3613904.3642693

[6] Moritz Langner, Peyman Toreini, and Alexander Mädche. 2025. Eye-Based Recognition of User Traits and States—A Systematic State-of-the-Art Review. Journal of Eye Movement Research 18, 2 (2025), 8. https://doi.org/10.3390/jemr18020008

[7] Lika Haizhou Liu, Xi Lu, Pei-Chun Chiang, Daniel A. Epstein, and Kurt D. Squire. 2025. Meditating Together: Practices, Benefits and Challenges of Meditation on Social Virtual Reality. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems (CHI ’25). Association for Computing Machinery, New York, NY, USA. https://doi.org/10.1145/3706598.3713172

[8] Subigya Nepal, Arvind Pillai, William Campbell, Talie Massachi, Eunsol Soul Choi, Xuhai Xu, Joanna Kuc, Jeremy F. Huckins, Jason Holden, Colin Depp, Nicholas Jacobson, Mary P. Czerwinski, Eric Granholm, and Andrew T. Campbell. 2024. Contextual AI Journaling: Integrating LLM and Time Series Behavioral Sensing Technology to Promote Self-Reflection and Well-being using the MindScape App. In Extended Abstracts of the 2024 CHI Conference on Human Factors in Computing Systems (CHI EA ’24). Association for Computing Machinery, New York, NY, USA. https://doi.org/10.1145/3613905.3650767

[9] Rock Yuren Pang, Hope Schroeder, Kynnedy Simone Smith, Solon Barocas, Ziang Xiao, Emily Tseng, and Danielle Bragg. 2025. Understanding the LLM-ification of CHI: Unpacking the Impact of LLMs at CHI through a Systematic Literature Review. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems (CHI ’25). Association for Computing Machinery, New York, NY, USA. https://doi.org/10.1145/3706598.3713726

[10] Vasileios Skaramagkas, Emmanouil Ktistakis, Dimitrios Manousos, Eleni Kazantzaki, Nikolaos S. Tachos, Evanthia Tripoliti, Dimitrios I. Fotiadis, and Michael Tsiknakis. 2023. eSEE-d: Emotional State Estimation Based on Eye-Tracking Dataset. Brain Sciences 13, 4 (2023), 589. https://doi.org/10.3390/brainsci13040589

[11] Filip Škola and Fotis Liarokapis. 2024. Increasing Meditation Efficiency with Virtual Reality. In Extended Abstracts of the 2024 CHI Conference on Human Factors in Computing Systems (CHI EA ’24). Association for Computing Machinery, New York, NY, USA. https://doi.org/10.1145/3613905.3651003

[12] Inhwa Song, SoHyun Park, Sachin R. Pendse, Jessica Lee Schleider, Munmun De Choudhury, and Young-Ho Kim. 2025. ExploreSelf: Fostering User-driven Exploration and Reflection on Personal Challenges with Adaptive Guidance by Large Language Models. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems (CHI ’25). Association for Computing Machinery, New York, NY, USA. https://doi.org/10.1145/3706598.3713883

[13] Loup Vuarnesson, Richard David Nelson, and Erkin Bek. 2024. Zenbu Koko - A Mixed Reality Platform for Inward Contemplation. In SIGGRAPH Asia 2024 XR. Association for Computing Machinery, New York, NY, USA, 1–2. https://doi.org/10.1145/3681759.3688915

[14] Wei-Long Zheng, Bao-Ning Dong, and Bao-Liang Lu. 2014. Multimodal Emotion Recognition Using EEG and Eye Tracking Data. In Proceedings of the 36th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC). IEEE, Chicago, IL, USA, 5040–5043. https://doi.org/10.1109/EMBC.2014.6944757

Footnote

⁎These authors contributed equally to this work.

This work is licensed under a Creative Commons Attribution 4.0 International License.

© 2026 Copyright held by the owner/author(s).

ACM ISBN 979-8-4007-2281-3/26/04.